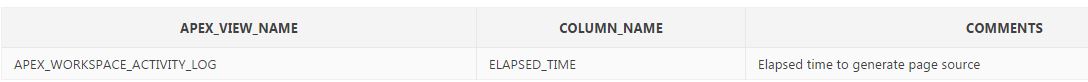

I'm trying to get a better understanding of what `elapsed_time` measures so I can use it in custom charts showing our most-used APEX apps.

The description in `APEX_DICTIONARY` is "Elapsed time to generate page source".

So, if I sum `elapsed_time` for a given app over a given time period, is the result the total time (in seconds) spent fully loading pages in that app during that period?
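To make the aggregation in question concrete, here is a minimal Python sketch. It assumes the log rows have already been fetched (for example from a view such as `APEX_WORKSPACE_ACTIVITY_LOG`) into records with `application_id`, `view_date` and `elapsed_time` fields. The `PageView` record and the `summarize_by_app` helper are hypothetical names used only for illustration. The sketch keeps a page-view count next to the summed `elapsed_time`, because the count shows how often an app was used while the sum shows total page-generation time.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime


@dataclass
class PageView:
    # Hypothetical row shape; field names are assumed to mirror the activity log columns.
    application_id: int
    view_date: datetime
    elapsed_time: float  # seconds to generate page source, per the dictionary description


def summarize_by_app(rows, start, end):
    """Return {application_id: (page_views, total_elapsed_seconds)} for start <= view_date < end."""
    totals = defaultdict(lambda: [0, 0.0])
    for row in rows:
        if start <= row.view_date < end:
            entry = totals[row.application_id]
            entry[0] += 1                  # one more page view for this app
            entry[1] += row.elapsed_time   # add this view's generation time
    return {app: (count, total) for app, (count, total) in totals.items()}


if __name__ == "__main__":
    # Made-up sample rows for illustration only.
    rows = [
        PageView(100, datetime(2024, 1, 1, 9), 0.25),
        PageView(100, datetime(2024, 1, 1, 10), 0.5),
        PageView(200, datetime(2024, 1, 2, 9), 1.0),
        PageView(100, datetime(2024, 2, 1, 9), 2.0),  # outside the January window
    ]
    print(summarize_by_app(rows, datetime(2024, 1, 1), datetime(2024, 2, 1)))
    # {100: (2, 0.75), 200: (1, 1.0)}
```

Ranking apps by the first element (page views) or by the second (summed `elapsed_time`) can produce different "highest-used" lists, which is why the sketch returns both.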

Thank you!